Forecast: Replacing Visual Mapping With The Generative Transformation Process (Ref: dbt)

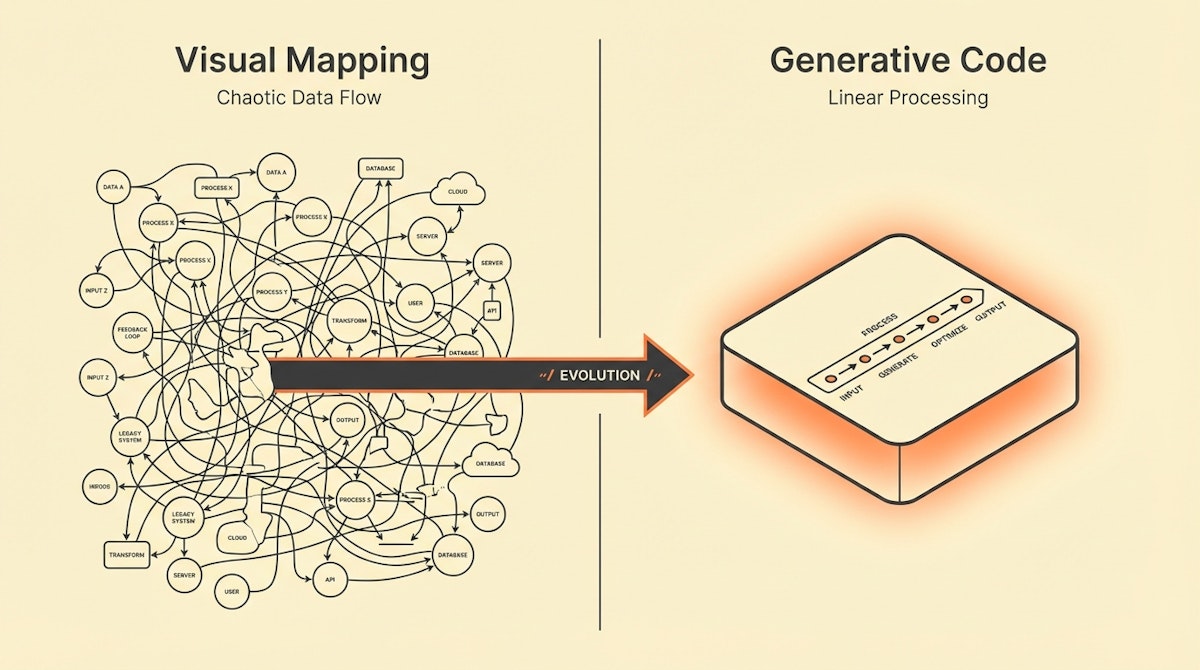

I used to believe that the ultimate goal of no-code automation was to never see a line of code again. I spent hours in Make and Zapier, dragging lines from one bubble to another, manually mapping dozens of fields from a CRM to a database. It felt productive until a schema changed, or a data array arrived in an unexpected format, and my beautiful visual flow turned into a nightmare of error handling.

As a Data Analyst, you likely spend a significant portion of your week on what I call "data janitorial work." You are mapping columns, formatting dates, and ensuring that First Name in tool A matches given_name in tool B. The current visual paradigm of automation tools—drag-and-drop mapping—was designed to lower the barrier to entry. However, for complex data operations, it has become a constraint.

Here is my forecast: in the next 6-12 months, we will stop manually mapping fields in visual builders. Instead, we will shift to what I call the Generative Transformation Process. Driven by the rapidly increasing accuracy of LLMs (like Claude 3.5 Sonnet and GPT-4o) at generating robust Python and JavaScript, the "Code Node" will replace the "Formatter" node.

We are moving towards a methodology similar to dbt (data build tool), where transformation is handled as code—clean, versionable, and scalable—but written by AI, not us.

The Constraint: The Fragility of Visual Mapping

Visual mapping works perfectly for simple, linear tasks (e.g., sending a Slack message when a form is filled). But it breaks down when you need to handle data integrity at scale.

If you have ever tried to loop through a nested JSON array in a standard Zapier step, or filter a list based on dynamic criteria in Make using only visual modules, you know the pain. You end up creating "spaghetti automations"—dozens of modules just to reformat a string.

This fragility creates three problems:

- Maintenance Debt: If the input API changes, you have to manually re-click and re-map every field.

- Performance Costs: Visual loops in tools like Make consume operations for every single iteration, so processing 1,000 rows costs at least 1,000 operations and can exhaust a plan's quota quickly.

- Limited Logic: You are restricted to the pre-built functions provided by the platform.
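To make the performance point concrete, here is a back-of-the-envelope sketch of the arithmetic. It assumes a simplified billing model in which every module inside a loop costs one operation per row and a code step costs one operation per batch; real pricing varies by platform and plan, and the function names are purely illustrative.

```python
def visual_loop_operations(rows: int, modules_per_row: int) -> int:
    """Operations used when every module inside a loop runs once per row."""
    return rows * modules_per_row


def code_node_operations(batches: int = 1) -> int:
    """Operations used when one code step handles a whole batch."""
    return batches


rows = 1_000
modules_per_row = 3  # hypothetical: format date, map status, write row

print(visual_loop_operations(rows, modules_per_row))  # 3000
print(code_node_operations())  # 1
```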

The Solution: The Generative Transformation Process

This approach leverages the new reality where LLMs are exceptionally good at writing small, self-contained transformation scripts. Instead of visually mapping 50 fields, you pass the entire data payload to an LLM, ask it to write a Python script to transform it to your target schema, and then run that script in a sandboxed code node (available in n8n, Make, and Zapier).
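As a rough illustration of the "pass the payload, ask for a script" idea, the sketch below assembles such a request as a plain string. The function name, the sample record, and the target schema are all hypothetical, and the actual call to an LLM is deliberately left out because it depends on the provider you use.

```python
import json


def build_prompt(sample_payload: list[dict], target_schema: dict) -> str:
    """Assemble a plain-text request for a transformation script."""
    return (
        "Write a Python function transform(records) that converts records "
        "shaped like the sample payload into records matching the target schema.\n\n"
        f"Sample payload:\n{json.dumps(sample_payload, indent=2)}\n\n"
        f"Target schema:\n{json.dumps(target_schema, indent=2)}\n"
    )


# Hypothetical example: a CRM record that must become a database row.
sample_payload = [{"First Name": "Ada", "Signup": "03/05/2024"}]
target_schema = {
    "type": "object",
    "properties": {
        "given_name": {"type": "string"},
        "signup_date": {"type": "string"},
    },
    "required": ["given_name", "signup_date"],
}

prompt = build_prompt(sample_payload, target_schema)
print(prompt)
```

Keeping the prompt in a function means it can live in version control next to the script it produces.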

Here is how the shift looks in practice:

| Feature | Visual Field Mapping | Generative Transformation |
|---|---|---|
| Setup Time | High (Manual clicking) | Low (Prompt-driven) |
| Complex Arrays | Difficult (Requires bundles) | Native (Loop in code) |
| Maintenance | Brittle (Field-dependent) | Resilient (Schema-agnostic) |
| Cost Efficiency | Low (1 op per loop) | High (1 op per batch) |

Step 1: Schema Extraction

Instead of looking at sample data to map fields one by one, you simply provide the JSON Schema of your input (Source) and your output (Destination).

For a Data Analyst, this amounts to standardizing the inputs. You don't need to define how to get from A to B yet; you just define what A and B look like.
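A minimal sketch of what "defining A and B" could look like, written as JSON Schema documents held in Python dictionaries. The field names (`order_id`, `created_at`, `status`, and so on) are invented for illustration, and the small helper only checks required keys; it is not a full JSON Schema validator.

```python
# Source: what the upstream tool sends (hypothetical fields).
SOURCE_SCHEMA = {
    "type": "array",
    "items": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string"},
            "created_at": {"type": "string"},
            "status": {"type": "string"},
        },
        "required": ["order_id", "created_at", "status"],
    },
}

# Destination: what the database table expects (hypothetical fields).
DESTINATION_SCHEMA = {
    "type": "array",
    "items": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string"},
            "order_date": {"type": "string"},
            "deal_stage": {"type": "string"},
        },
        "required": ["order_id", "order_date", "deal_stage"],
    },
}


def missing_required(record: dict, schema: dict) -> list[str]:
    """Return the required fields that are absent from a single record."""
    return [name for name in schema["items"]["required"] if name not in record]


print(missing_required({"order_id": "A1"}, SOURCE_SCHEMA))
# ['created_at', 'status']
```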

Step 2: Logic Prompting (The Spec)

This is where the strategy shifts. You stop acting as the "pipe builder" and start acting as the "architect." You write a prompt for the LLM that explains the transformation logic in plain English.

Example Prompt: "Write a Python script for an n8n Code node. Take the input JSON array of orders. For each order, format the date to YYYY-MM-DD. If the 'status' is 'paid', map it to 'Closed Won'. Return a single JSON array ready for a batch SQL insert."
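For a sense of what comes back, here is a sketch of the kind of script that prompt might yield. It is written as a plain function rather than against any platform's Code node API, and it rests on two assumptions the prompt leaves open: incoming dates look like `03/05/2024` (month/day/year), and statuses other than `paid` pass through unchanged.

```python
from datetime import datetime

# Assumed mapping; only 'paid' is specified in the prompt.
STATUS_MAP = {"paid": "Closed Won"}


def transform_orders(orders: list[dict]) -> list[dict]:
    """Reformat dates and map statuses for a whole batch of orders."""
    transformed = []
    for order in orders:
        # Assumed input format: MM/DD/YYYY.
        parsed = datetime.strptime(order["date"], "%m/%d/%Y")
        transformed.append(
            {
                **order,
                "date": parsed.strftime("%Y-%m-%d"),
                "status": STATUS_MAP.get(order["status"], order["status"]),
            }
        )
    return transformed


orders = [
    {"id": 1, "date": "03/05/2024", "status": "paid"},
    {"id": 2, "date": "12/31/2023", "status": "pending"},
]
print(transform_orders(orders))
```

Spelling out the assumed date format in the prompt itself is worth the extra sentence, since an LLM will otherwise have to guess it.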

Step 3: The Code Node Execution

The LLM generates the script. You paste this script into the Code Node of your automation tool.

Why this changes everything:

- Batch Processing: The script can process 1,000 rows in a fraction of a second within a single operation step, bypassing the operation limits of visual loops.

- Flexibility: You can use Regex, conditional logic, and math libraries that visual builders don't support.

- Portability: If you move from Zapier to n8n, you can take your Python script with you. You cannot take your visual map.
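As a small example of the flexibility point, the sketch below cleans a whole batch of phone numbers with one regular expression in a single pass. The `phone` field name and the sample rows are hypothetical.

```python
import re

NON_DIGITS = re.compile(r"\D")  # matches any character that is not a digit


def normalize_phones(rows: list[dict]) -> list[dict]:
    """Strip every non-digit character from each row's phone field."""
    return [{**row, "phone": NON_DIGITS.sub("", row["phone"])} for row in rows]


rows = [
    {"name": "Ada", "phone": "(555) 123-4567"},
    {"name": "Grace", "phone": "555.987.6543"},
]
print(normalize_phones(rows))
```

Because it relies only on the standard library, the same function should run unchanged wherever a Python runtime is available.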

Integrating the "dbt" Mindset

In the data engineering world, dbt revolutionized analytics by treating data transformation as code (SQL) rather than manual ETL configurations. This allowed for version control, testing, and clearer logic.

The Generative Transformation Process brings this same maturity to operational automation. By shifting logic from the UI (user interface) to the code execution layer, we make our pipelines more reliable.
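One way to borrow the testing habit is to run a few assertions over the transformed rows before they reach the database, loosely in the spirit of dbt's `not_null` tests. This is only a sketch: the required fields and the date rule are assumptions about a hypothetical destination table, not part of dbt itself.

```python
import re

DATE_PATTERN = re.compile(r"^\d{4}-\d{2}-\d{2}$")
REQUIRED_FIELDS = ("id", "date", "status")  # hypothetical destination columns


def find_violations(rows: list[dict]) -> list[str]:
    """Collect human-readable problems instead of failing on the first one."""
    violations = []
    for index, row in enumerate(rows):
        for field in REQUIRED_FIELDS:
            if row.get(field) is None:
                violations.append(f"row {index}: {field} is missing")
        date = row.get("date")
        if date is not None and not DATE_PATTERN.match(str(date)):
            violations.append(f"row {index}: date is not YYYY-MM-DD")
    return violations


good = [{"id": 1, "date": "2024-03-05", "status": "Closed Won"}]
bad = [{"id": 2, "date": "03/05/2024"}]

print(find_violations(good))  # []
print(find_violations(bad))
```

A check like this can sit in the same code step as the transformation, so a bad batch is stopped before the insert rather than discovered afterwards.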

I have observed that teams adopting this approach reduce their automation error rates significantly. The LLM acts as the bridge that lowers the technical barrier, letting analysts who aren't fluent in Python still use code-based transformation.

Conclusion

The constraint of "I don't know how to code" is disappearing. The new constraint is "Can I clearly articulate the data logic?"

For us as Data Analysts, this is a massive win. It moves us away from the tedious work of connecting dots and towards the strategic work of designing data flows. In the coming months, expect to see your "No-Code" tools becoming surprisingly code-heavy—hidden behind the helpful interface of an AI assistant.